The nomenclature of metrological characteristics (MX) given in the previous paragraph implies strict standardization of the MX of measuring instruments (SI) used in high-precision laboratory measurements and in the metrological certification of other SI.

In technical measurements, when there is no intention to separate random and systematic components, when the dynamic error of the SI is insignificant, when influencing (destabilizing) factors are not taken into account, etc., a coarser form of standardization can be used: assigning the SI a certain accuracy class according to GOST 8.401–80.

The accuracy class is a generalized MX that defines various SI properties. For example, for indicating electrical measuring instruments the accuracy class covers, in addition to the basic error, the variation of readings, and for measures of electrical quantities it covers the instability (the percentage change in the value of the measure over a year). The SI accuracy class already accounts for both systematic and random errors. However, it is not a direct characteristic of the accuracy of measurements performed with these SI, since measurement accuracy also depends on the measurement method, the interaction of the SI with the object, the measurement conditions, etc.

In particular, to measure a quantity with an accuracy of 1%, it is not enough to choose an SI with an error of 1%. The selected SI must have a considerably smaller error, since at least the method error must also be taken into account.

Because of the great diversity of SI and of their MX, GOST 8.401–80 establishes several ways of assigning accuracy classes.

They rest on the following provisions:

When the accuracy class is determined, the limits of the permissible basic error δ_DOS are normalized first. The limits of the permissible additional error are set as a fraction (or multiple) of δ_DOS.

Accuracy classes are assigned to SI during their development, based on the results of state acceptance tests. If an SI is designed to measure the same physical quantity in different ranges, or to measure different physical quantities, it may be assigned different accuracy classes for each range and for each measured quantity.

In operation, SI must comply with these accuracy classes. However, where justified by operational requirements, the accuracy class assigned at the factory may be lowered during operation.

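GOST 8.401–80 establishes three main types of SI accuracy classes, with the limits of permissible basic error expressed as:

- absolute error;
- reduced error;
- relative error.

The numerical limits are chosen from the series

$$A \cdot 10^{n},$$

where A = 1; 1.5; (1.6); 2; 2.5; (3); 4; 5; 6 (the values 1.6 and 3 are acceptable but not recommended) and n = 1; 0; −1; −2; …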
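The series A·10^n can be enumerated to check whether a proposed class value is standard. This is a minimal sketch, assuming values are handled as decimal strings so that binary rounding does not affect comparisons; the function names and the default exponent range are hypothetical choices for illustration.

```python
from decimal import Decimal

# Mantissas A as listed in the text; 1.6 and 3 are acceptable but not recommended.
RECOMMENDED_A = ["1", "1.5", "2", "2.5", "4", "5", "6"]
ALLOWED_A = RECOMMENDED_A + ["1.6", "3"]


def class_series(n_values, include_allowed=False):
    """Return the sorted values A * 10**n for the given exponents n."""
    mantissas = ALLOWED_A if include_allowed else RECOMMENDED_A
    # scaleb(n) multiplies a Decimal by 10**n exactly
    values = {Decimal(a).scaleb(n) for a in mantissas for n in n_values}
    return sorted(values)


def is_standard_class(value, n_values=range(-3, 2), include_allowed=True):
    """Check whether a class value given as a string (e.g. "0.5") is in the series."""
    return Decimal(value) in class_series(n_values, include_allowed)
```

For example, `is_standard_class("0.5")` and `is_standard_class("0.02")` are true, while `"0.3"` passes only when the non-recommended mantissa 3 is allowed.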

SI accuracy classes expressed in terms of absolute errors are denoted by capital Latin letters or Roman numerals. The farther a letter is from the beginning of the alphabet, the larger the permissible absolute error. For example, a class C SI is more accurate than a class M SI; the letter is only a symbol and does not itself give the value of the error.

Accuracy classes expressed in terms of relative SI error are assigned in two ways.

- If the SI error has mainly a multiplicative component, the limits of the permissible basic relative error are set by the formula

$$\delta = \frac{\Delta}{x} \cdot 100\% = \pm q.$$

This is how the accuracy classes of AC bridges, electricity meters, voltage dividers, measuring transformers, etc. are designated.

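- If the SI error has both multiplicative and additive components, the accuracy class is denoted by two numbers corresponding to the values c and d in the formula

$$\delta = \pm\left[c + d\left(\left|\frac{x_k}{x}\right| - 1\right)\right],$$

where x_k is the largest (in modulus) of the measurement limits.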

Here c and d are also taken from the series A·10^n; as a rule, c > d. For example, accuracy class 0.02/0.01 means that c = 0.02 and d = 0.01, i.e., the reduced error is γ_n = 0.01% at the beginning of the measurement range and γ_k = 0.02% at its end, where the relative error also equals c.
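Multiplying δ by x/x_k gives the reduced error c·x/x_k + d·(1 − x/x_k), which equals c at the upper limit and tends to d as x → 0. A minimal sketch checking this numerically for class 0.02/0.01 on a 0–50 range; the function names are hypothetical.

```python
def relative_error_cd(x, x_k, c, d):
    """Limit of permissible relative error, %, for a class written as c/d.

    delta = c + d * (|x_k / x| - 1); x_k is the largest (in modulus)
    measurement limit, x is the measured value (x != 0).
    """
    return c + d * (abs(x_k / x) - 1)


def reduced_error_cd(x, x_k, c, d):
    """The same limit re-expressed as a reduced error, % of x_k."""
    return relative_error_cd(x, x_k, c, d) * abs(x / x_k)


# Class 0.02/0.01 on a range with upper limit 50
for x in (50.0, 25.0, 5.0, 0.5):
    print(x, relative_error_cd(x, 50.0, 0.02, 0.01), reduced_error_cd(x, 50.0, 0.02, 0.01))
```

The relative error grows as x decreases, while the reduced error stays between d and c.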

In addition, GOST 22261-94 establishes limits of permissible basic error in the form of relative error expressed in decibels (dB):

$$\delta_{\mathrm{dB}} = A' \lg\left(1 + \frac{\delta}{100}\right),$$

where δ is the relative error in percent; A' = 10 when measuring energy quantities (power, energy, energy density) and A' = 20 when measuring field quantities (voltage, current, field strength).
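A minimal sketch of the conversion from a relative error in percent to decibels, assuming the formula above; the function name and the `quantity` keys are hypothetical labels for the two cases the standard distinguishes.

```python
import math


def relative_error_db(delta_percent, quantity="field"):
    """Convert a relative error in percent to dB: A' * lg(1 + delta / 100).

    A' = 10 for energy quantities (power, energy, energy density),
    A' = 20 for field quantities (voltage, current, field strength).
    """
    a_prime = {"energy": 10, "field": 20}[quantity]
    return a_prime * math.log10(1 + delta_percent / 100)


print(relative_error_db(1.0, "field"), relative_error_db(1.0, "energy"))
```

For the same relative error, the dB value for a field quantity is twice that for an energy quantity, because power is proportional to the square of voltage or current.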

Note that if two devices have different sensitivities, S1 = −100 dB/W and S2 = −95 dB/W, the sensitivity of the second SI is higher than that of the first, since −95 > −100.

The most widespread method (especially for analog SI) is to normalize the accuracy class by the reduced error:

$$\gamma = \frac{\Delta}{x_N} \cdot 100\% = \pm p.$$

The designation of the accuracy class in this case depends on the normalizing value x_N, i.e., on the SI scale.

If x_N is expressed in units of the measured quantity, the accuracy class is indicated by a number equal to the limit of the permissible reduced error. For example, class 1.5 means that γ = 1.5%.

If x_N is the length of the scale (for example, for ohmmeters), class 1.5 means that γ = 1.5% of the scale length.

Comparing the ways of expressing errors leads to several considerations.

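With a known SI accuracy class expressed in terms of the reduced error γ, and the sensitivity S (the ratio of the scale length L of the device to its measuring range), the absolute error of the SI is

$$\Delta = \pm\frac{\gamma\, x_N}{100} = \pm\frac{\gamma\, L}{100\, S},$$

and the relative error at the value x is, respectively,

$$\delta = \frac{\Delta}{x} \cdot 100\% = \pm\frac{\gamma\, L}{S\, x} = \pm\frac{\gamma\, x_N}{x}.$$

When the class is written in the c/d form, the absolute error is

$$\Delta = \pm\frac{(c - d)\,x + d\,x_k}{100}. \qquad (3)$$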

The calculated coefficients c and d are rounded to the values of the series A·10^n; their relationship with the accuracy class expressed by the reduced error γ is given in the following table:

Table: correspondence between accuracy classes γ and coefficients c/d

Table of formulas for calculating errors and designating SI accuracy classes

The relative error formula δ = Δ/x shows that δ is inversely proportional to x and varies along a hyperbola: the relative error equals the SI class only at the final point of the scale (x = x_k). As x → 0, δ → ∞. When the measured value decreases to x_min, the relative error reaches 100%; this value of the measured quantity is called the sensitivity threshold.

In summary: if the accuracy class of an SI is set by the largest permissible reduced error, while estimating the error of a particular measurement requires the absolute or relative error at that point, then choosing, for example, a class 1 SI (γ = 1%) for measurements with a relative error of ±1% is correct only if the upper limit x_N of the SI equals the measured value x. In other cases, the relative measurement error must be determined by the formula

$$\delta = \pm\gamma\,\frac{x_N}{x}. \qquad (4)$$
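Setting γ·x_N/x_min = 100% gives the sensitivity threshold x_min = γ·x_N/100, which numerically equals the absolute error limit Δ. A minimal sketch of both relations; the function names are hypothetical.

```python
def relative_error_from_reduced(x, x_n, gamma):
    """Limit of relative error, %, at reading x for a reduced-error class gamma."""
    return gamma * x_n / abs(x)


def sensitivity_threshold(x_n, gamma):
    """Reading x_min at which the relative error limit reaches 100 %."""
    return gamma * x_n / 100


# Class 1 instrument with x_N = 100 (in units of the measured quantity)
for x in (100.0, 50.0, 10.0, 1.0):
    print(x, relative_error_from_reduced(x, 100.0, 1.0))
print("threshold:", sensitivity_threshold(100.0, 1.0))
```

Halving the reading doubles the relative error, which is the hyperbolic dependence described above.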

Example. A device with measurement limits of 0 to 50 A and a uniform scale shows a reading of 25 A. Neglecting other types of measurement error, estimate the limits of the permissible absolute error of this reading for the SI accuracy classes 0.02/0.01, 0.5 in a circle, and 0.5.
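One way to work the example, sketched in Python under the interpretations given earlier: 0.02/0.01 uses δ = c + d(x_k/x − 1), 0.5 in a circle is a relative-error class (δ = 0.5%), and plain 0.5 is a reduced-error class with x_N = 50 A (zero mark at the edge of the range). The helper names are hypothetical.

```python
def abs_error_cd(x, x_k, c, d):
    """Absolute error limit for a c/d class: delta(x) * x / 100."""
    delta = c + d * (abs(x_k / x) - 1)
    return delta * abs(x) / 100


def abs_error_relative_class(x, q):
    """Absolute error limit for a relative-error class q (number in a circle)."""
    return q * abs(x) / 100


def abs_error_reduced_class(x_n, p):
    """Absolute error limit for a reduced-error class p with normalizing value x_N."""
    return p * x_n / 100


x, x_k = 25.0, 50.0  # reading and upper limit, A
results = {
    "0.02/0.01": abs_error_cd(x, x_k, 0.02, 0.01),
    "0.5 in a circle": abs_error_relative_class(x, 0.5),
    "0.5": abs_error_reduced_class(x_k, 0.5),
}
for name, value in results.items():
    print(f"class {name}: ±{value:.4f} A")
```

The limits come out as ±0.0075 A, ±0.125 A and ±0.25 A respectively: for a reading in the middle of the scale, the reduced-error class gives the widest limits.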

Standardization of errors of measuring instruments

Standardization (normalization) of the metrological characteristics of measuring instruments consists in establishing limits for the deviations of the actual values of their parameters from the nominal values.

Each instrument is assigned certain nominal characteristics. The actual characteristics of measuring instruments do not coincide with the nominal ones, and this difference determines their errors.

Usually, the normalizing value is taken equal to:

- the larger of the measurement limits, if the zero mark is at the edge of or outside the measuring range;

- the sum of the absolute values of the measurement limits, if the zero mark is within the measuring range;

- the length of the scale, or of the part of it corresponding to the measuring range, if the scale is substantially non-uniform (for example, for an ohmmeter);

- the nominal value of the measured quantity, if one is established (for example, for a frequency meter with a nominal value of 50 Hz);

- the modulus of the difference between the measurement limits, if a scale with a conditional zero is used (for example, for temperature), etc.
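The rules above can be sketched as a small selection function. This is a minimal illustration, not a normative procedure: the function name, its keyword arguments, and the order in which the special cases are checked are assumptions made for the sketch.

```python
def normalizing_value(lower, upper, *, nominal=None, conditional_zero=False,
                      scale_length=None):
    """Pick x_N following the listed rules (hypothetical helper).

    The precedence of the special cases is an assumption of this sketch.
    """
    if scale_length is not None:      # substantially non-uniform scale
        return scale_length
    if nominal is not None:           # a nominal value is established
        return nominal
    if conditional_zero:              # e.g. a temperature scale
        return abs(upper - lower)
    if lower < 0 < upper:             # zero mark inside the range
        return abs(lower) + abs(upper)
    return max(abs(lower), abs(upper))  # zero at the edge or outside


print(normalizing_value(0, 50))          # ammeter 0...50 A
print(normalizing_value(-30, 30))        # zero in the middle of the scale
print(normalizing_value(45, 55, nominal=50))
```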

Most often, the upper measurement limit of the instrument is taken as the normalizing value.

Deviations of the parameters of measuring instruments from their nominal values, which cause measurement error, cannot be specified unambiguously; therefore, maximum permissible values must be set for them.

This standardization guarantees the interchangeability of measuring instruments.

Standardizing the errors of measuring instruments means establishing the limit of permissible error.

This limit is understood as the largest error (regardless of sign) at which the measuring instrument can still be recognized as fit and approved for use.

The approach to standardizing the errors of measuring instruments is as follows:

- the norms specify limits of permissible errors that include both systematic and random components;

- all properties of measuring instruments that affect their accuracy are standardized separately.

The standard establishes a series of limits of permissible error. Accuracy classes of measuring instruments serve the same purpose.

Accuracy classes of measuring instruments

The accuracy class is a generalized SI characteristic expressed by the limits of the permissible values of its basic and additional errors, as well as by other characteristics that affect accuracy. The accuracy class is not a direct assessment of the accuracy of measurements performed with this SI, since the error also depends on a number of factors: the measurement method, measurement conditions, etc. The accuracy class only allows one to judge the range within which the error of an SI of a given type lies. General provisions for dividing measuring instruments by accuracy class are established by GOST 8.401–80.

The limits of permissible basic error determined by the accuracy class define the interval within which the basic SI error lies.

SI accuracy classes are set in standards or specifications. A measuring instrument may have two or more accuracy classes. For example, if it has two or more measurement ranges for the same physical quantity, it can be assigned two or more accuracy classes. Instruments designed to measure several physical quantities can also have a different accuracy class for each measured quantity.

The limits of permissible basic and additional errors are expressed as reduced, relative or absolute errors. The choice of form depends on how the error varies within the measurement range, as well as on the conditions of use and the purpose of the SI.

The limits of permissible absolute basic error are set by one of the formulas

$$\Delta = \pm a \quad \text{or} \quad \Delta = \pm(a + b x),$$

where x is the value of the measured quantity or the number of divisions counted on the scale, and a, b are positive numbers independent of x. The first formula describes a purely additive error, and the second describes the sum of additive and multiplicative errors.

In technical documentation, accuracy classes established in terms of absolute errors are designated, for example, as "Accuracy class M", and on the device by the letter "M". Capital Latin letters or Roman numerals are used, with smaller error limits corresponding to letters closer to the beginning of the alphabet or to smaller numerals. The limits of permissible reduced basic error are determined by the formula

$$\gamma = \frac{\Delta}{x_N} \cdot 100\% = \pm p,$$

where x_N is the normalizing value expressed in the same units as Δ, and p is an abstract positive number selected from the series (1; 1.5; (1.6); 2; 2.5; (3); 4; 5; 6)·10^n, n = 1; 0; −1; −2; …

The value x_N is set equal to the larger (in modulus) of the measurement limits for an SI with a uniform, nearly uniform or power-law scale, and for measuring transducers whose zero output signal lies at the edge of or outside the measuring range. For an SI whose scale has a conditional zero, x_N equals the modulus of the difference between the measurement limits.

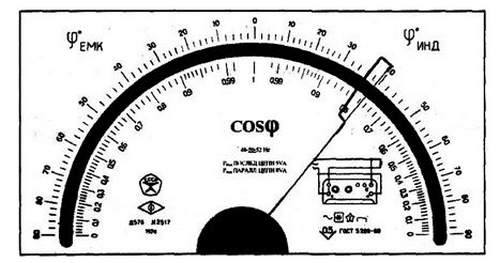

For instruments with a substantially non-uniform scale, x_N is taken equal to the entire length of the scale or the part of it corresponding to the measuring range. In this case, the limits of absolute error are expressed, like the scale length, in units of length, and the accuracy class is conventionally indicated on the instrument as a number with a tick mark, for example 0.5 with a tick, where 0.5 is the value of p (Fig. 3.1).

In the other cases considered, the accuracy class is indicated by the specific number p, for example 1.5. The designation is applied to the dial, panel or case of the device (Fig. 3.2).

If the absolute error is specified by the formula Δ = ±(a + bx), the limits of permissible relative basic error are

$$\delta = \frac{\Delta}{x} \cdot 100\% = \pm\left[c + d\left(\left|\frac{x_k}{x}\right| - 1\right)\right], \qquad (3.1)$$

where c and d are abstract positive numbers selected from the series (1; 1.5; (1.6); 2; 2.5; (3); 4; 5; 6)·10^n, and x_k is the largest (in modulus) of the measurement limits. When formula (3.1) is used, the accuracy class is designated as "0.02/0.01", where the numerator is the specific value of c and the denominator is that of d (Fig. 3.3).
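Equating 100·(a + bx)/x with c + d(x_k/x − 1) term by term gives d = 100·a/|x_k| and c = 100·b + d. A minimal sketch of that conversion; the function name is hypothetical, and in practice the results would still be rounded to the series values as described above.

```python
def cd_from_ab(a, b, x_k):
    """Return (c, d) in percent for an absolute error limit ±(a + b*x).

    a: additive part (units of x), b: multiplicative coefficient,
    x_k: largest (in modulus) measurement limit.
    """
    d = 100 * a / abs(x_k)  # reduced error at the start of the range
    c = 100 * b + d         # relative error at x = x_k
    return c, d


# a = 0.005 A, b = 0.0001 on a 0...50 A range -> class 0.02/0.01
c, d = cd_from_ab(0.005, 0.0001, 50.0)
print(c, d)
```

At x = 25 A both forms give a relative error of 0.03%, i.e. an absolute error of 0.0075 A.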

The limits of permissible relative basic error are determined by the formula

$$\delta = \frac{\Delta}{x} \cdot 100\% = \pm q$$

if Δ = ±bx. The constant q is set in the same way as p. On the device, the accuracy class is indicated as a number in a circle, for example 0.5 in a circle, where 0.5 is the specific value of q (Fig. 3.4).

SI standards and specifications specify the minimum value x_min starting from which the accepted way of expressing the limits of permissible relative error is applicable. The ratio x_k/x_min is called the dynamic range of measurement.

The construction rules and examples of designation of accuracy classes in the documentation and on measuring instruments are given in table 3.1.

The accuracy class is the main metrological characteristic of an instrument; it determines the permissible values of the basic and additional errors that affect measurement accuracy.

The error can be normalized, in particular, relative to:

- the measurement result (relative error). In this case, according to GOST 8.401-80 (which replaced GOST 13600-68), the numerical designation of the accuracy class (in percent) is placed in a circle;

- the length (upper limit) of the instrument scale (reduced error).

For pointer instruments, the accuracy class is usually written as a number, for example 0.05 or 4.0. This number gives the maximum possible error of the instrument, expressed as a percentage of the largest value measurable in the given range. Thus, for a voltmeter with a measuring range of 0–30 V, accuracy class 1.0 means that the error at any pointer position on the scale does not exceed 0.3 V. Accordingly, taking this limit as 3s, the standard deviation s of the instrument is 0.1 V.

The relative error of a result obtained with this voltmeter depends on the measured voltage and becomes unacceptably high at low voltages: when a voltage of 0.5 V is measured, the error is 0.3/0.5 = 60%. Consequently, such an instrument is unsuitable for studying processes in which the voltage varies within 0.1–0.5 V.
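The voltmeter figures can be reproduced with a few lines of arithmetic. A minimal sketch, assuming, as the text does, that the 0.3 V limit corresponds to 3s; the helper names are hypothetical.

```python
def error_limit(gamma, x_n):
    """Absolute error limit for a reduced-error class gamma (%) and x_N."""
    return gamma * x_n / 100


def relative_error(delta_abs, x):
    """Relative error of a reading x, in percent."""
    return 100 * delta_abs / abs(x)


delta_abs = error_limit(1.0, 30.0)  # class 1.0, range 0...30 V -> 0.3 V
s = delta_abs / 3                   # assumes the limit corresponds to 3s
for volts in (30.0, 10.0, 0.5):
    print(f"{volts:5.1f} V: relative error {relative_error(delta_abs, volts):.0f} %")
```

The same absolute limit of 0.3 V means 1% at full scale but 60% at 0.5 V.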

Usually, the scale division value of a pointer instrument is matched to the instrument's own error. If the accuracy class of the instrument is unknown, its error s is taken as half of the smallest scale division. Clearly, when taking readings from the scale, there is no point in trying to estimate fractions of a division, since the result will not become more accurate.

Note that the concept of accuracy class is used in various fields of technology. For example, the machine-tool industry uses accuracy classes of metal-cutting machines and of EDM machines (GOST 20551).

Accuracy class designations may take the form of capital Latin letters, Roman numerals, or Arabic numerals with added conventional symbols. If the accuracy class is indicated by Latin letters or Roman numerals, it is determined by the limits of absolute error. If it is indicated by Arabic numerals without conventional symbols, it is determined by the limits of reduced error, with the largest (in modulus) of the measurement limits as the normalizing value. If it is indicated by Arabic numerals with a tick mark, it is determined by the limits of reduced error, with the scale length as the normalizing value. If it is indicated by Arabic numerals in a circle, it is determined by the limits of relative error.
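These rules can be sketched as a small classifier. Because the circle and tick symbols cannot be typed directly, the sketch assumes a hypothetical text encoding: "(0.5)" for a number in a circle and a trailing "v" for a tick; the c/d form from earlier in the text is included as well.

```python
import re

NUM = r"\d+(\.\d+)?"


def decode_class(designation):
    """Classify an accuracy-class designation (hypothetical text encoding).

    "M", "C", "II"  -> Latin letters / Roman numerals: absolute error
    "0.02/0.01"     -> relative error, c/d form
    "(0.5)"         -> number in a circle: relative error
    "0.5v"          -> number with a tick: reduced error, x_N = scale length
    "1.5"           -> plain number: reduced error, x_N = largest |limit|
    """
    s = designation.strip()
    if re.fullmatch(r"[A-Z]+", s):
        return "absolute"
    if re.fullmatch(NUM + "/" + NUM, s):
        return "relative (c/d)"
    if re.fullmatch(r"\(" + NUM + r"\)", s):
        return "relative"
    if re.fullmatch(NUM + "v", s):
        return "reduced (scale length)"
    if re.fullmatch(NUM, s):
        return "reduced (largest limit)"
    raise ValueError(f"unrecognized designation: {designation!r}")
```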

Instruments of accuracy class 0.5 (0.2) maintain their class starting from 5% of the rated load, and those of class 0.5S (0.2S) starting from 1% of the rated load.